Using AI for research - A Practical Comparison of Six Popular Tools

In a nutshell

A review of six AI apps used for research reveals results ranging from “a useful starting point” to “completely misleading”

AI output will improve but presently should be treated as a starting point only – not accepted at face value

The results from one tool raise questions about the quality of peer-reviewed academic literature

I’ve been using the X AI app Grok regularly as a search engine substitute, to help with research and as a first-pass editor of my articles. Recently, I decided to run a simple comparison of Grok against five other popular AI tools for the purpose of conducting research.

I chose a subject I know reasonably well – the difference between raw milk intended for direct human consumption and raw milk intended for pasteurization. I asked each AI the same two questions:

What is the difference between raw milk intended for human consumption and that intended for pasteurization?

Differences between UK and US?

Results and recommendations are provided below.

Questions asked and answered

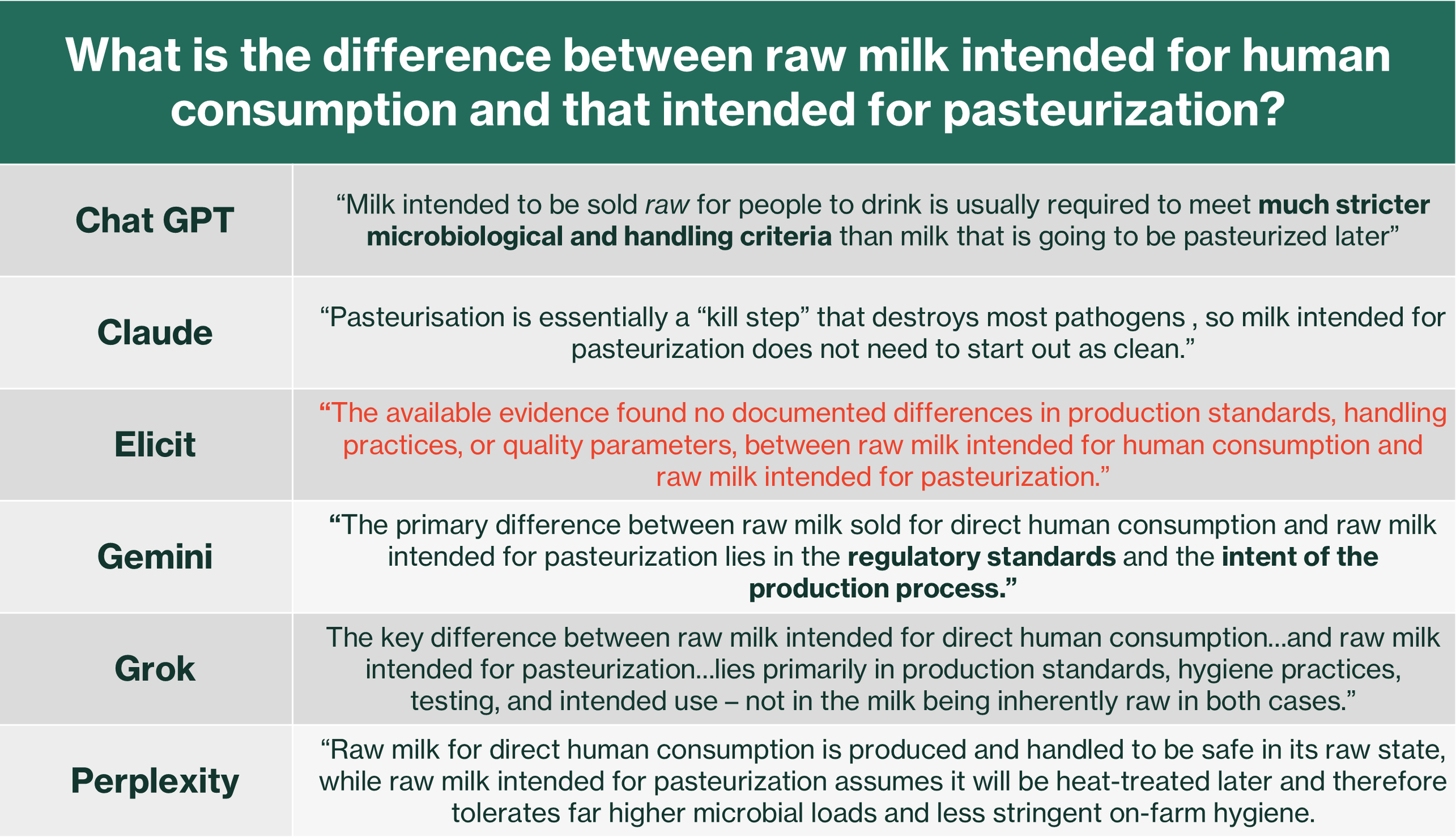

I’ve summarized the high-level points from each AI’s response to the first question in Table 1.

Table 1: A representative summary of answers to the main question

I was looking for clear, accurate descriptions of the practical differences plus any helpful risk-related analogies:

Grok and Perplexity - the clearest and most detailed answers

ChatGPT and Gemini - somewhat vague and “wishy-washy”

Elicit - essentially useless

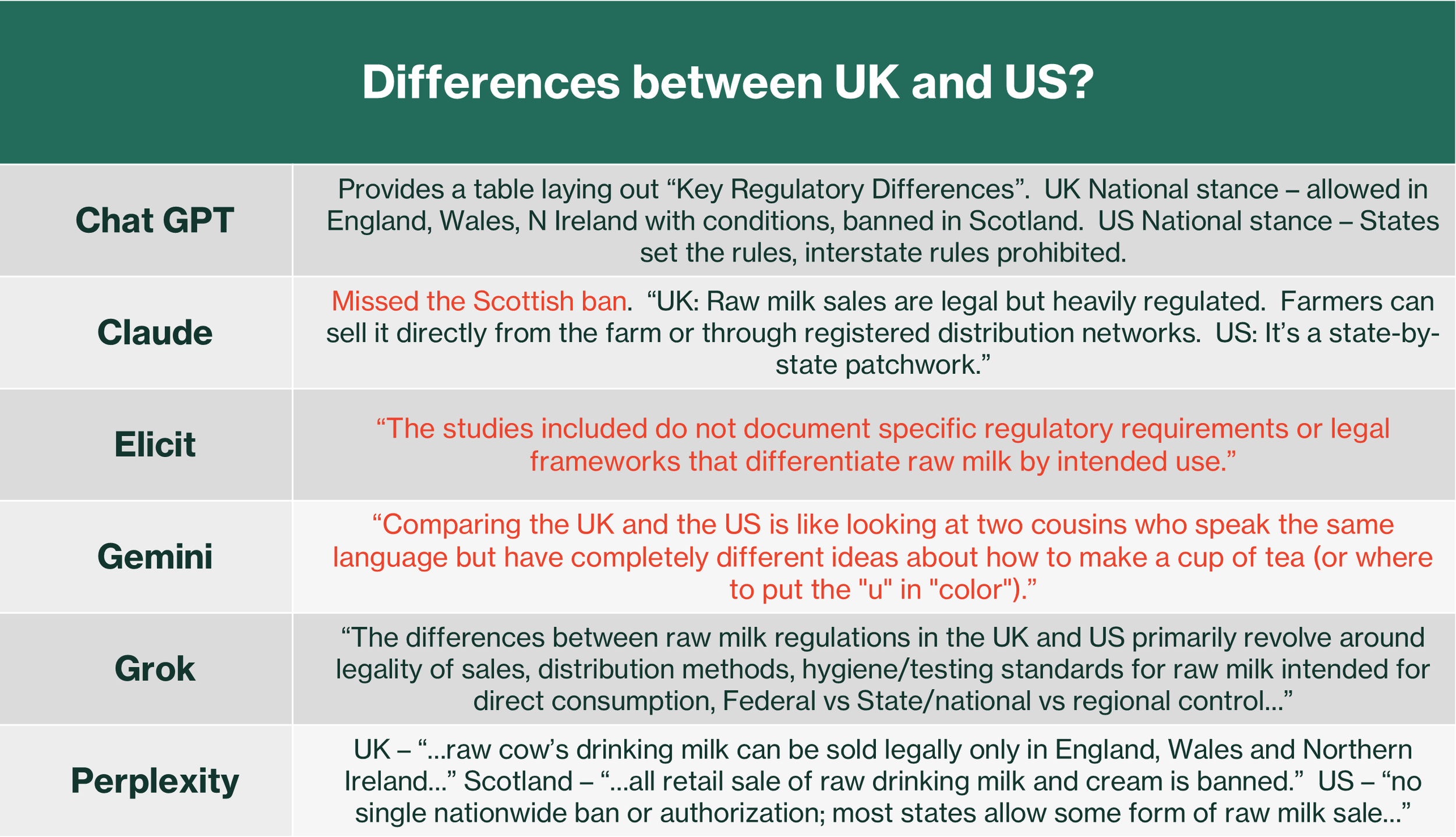

Responses to the follow-up question (UK vs. US differences, in the context of the original topic) are summarized in Table 2.

Table 2: A representative summary of answers to the follow-up question

I evaluated clarity and usefulness. I also evaluated how well the AI maintained context from the first question.

Grok and Perplexity – again the most useful and comprehensive

ChatGPT - somewhat useful but a little dry and less detailed

Claude - missed an important fact: the sale and distribution of raw milk are banned in Scotland

Elicit - no use because the academic papers it drew from didn’t address the issue

Gemini – unintentionally funny - completely missed the milk context and instead described broader cultural differences between the UK and US

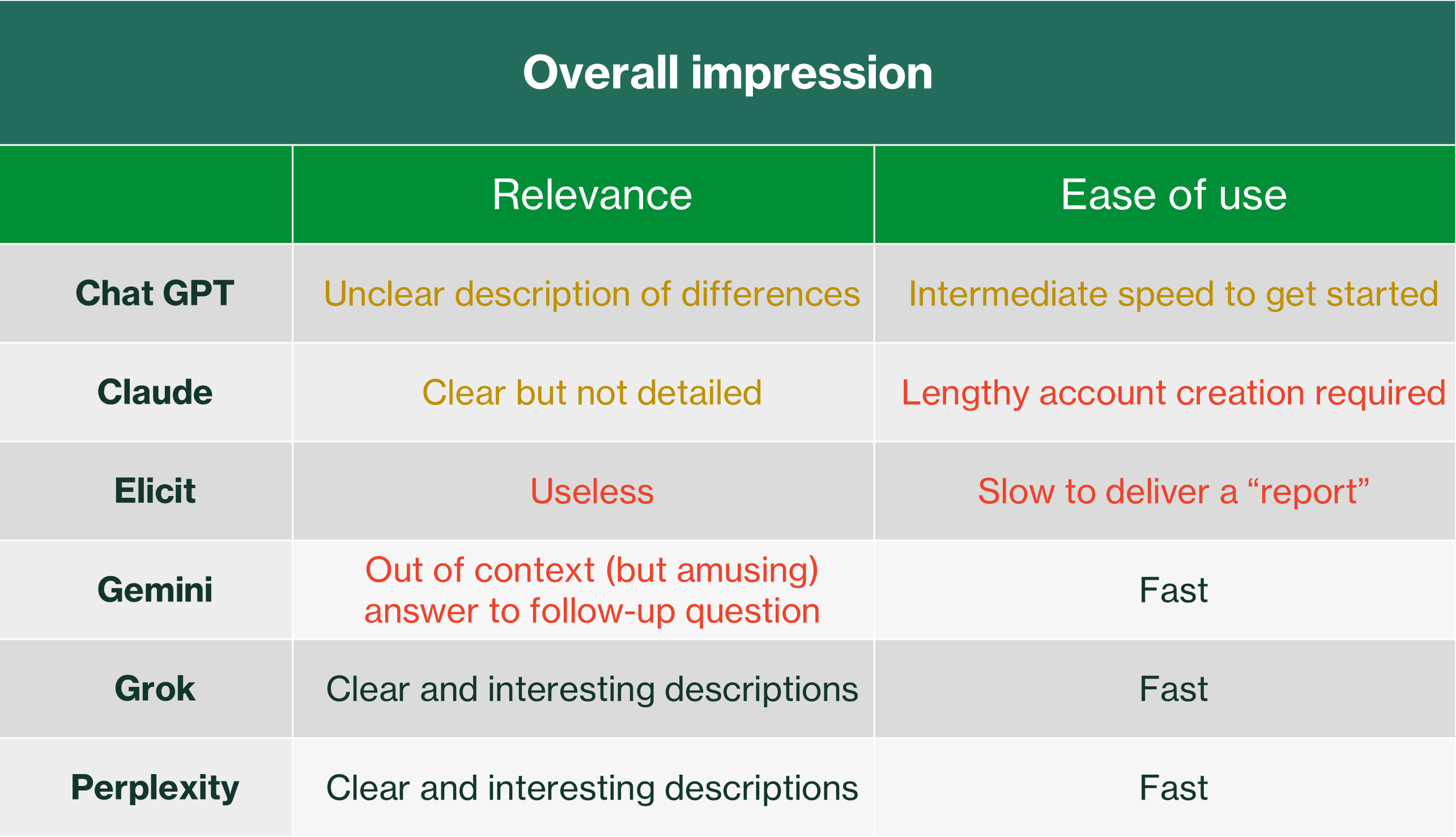

Overall impressions and recommendations for use

My overall impressions are summarized in Table 3.

Table 3: Overall impressions of the six AI tools

My recommendations are:

Grok and Perplexity – my two top choices – good starting points for research

ChatGPT – next best but with reservations

Gemini – I’m unlikely to use given its contextual blindness

Elicit – use only with extreme caution (discussed below)

Summary

My experience with six popular AI apps revealed a broad range of capability from genuinely useful (Grok and Perplexity) to very misleading (Elicit). Gemini provided an element of unintended humour in its quite misleading output.

I’m not completely surprised by these results. I went into the experiment with the view that AI tools are helpful but not yet fully reliable. I use Grok regularly for initial exploration of topics and as a first-pass editor, but I never accept its output as it stands: for research I check the references, and for articles I perform a third edit myself.

I was surprised, however, by Gemini’s lack of context and Elicit’s downright misdirection. The Elicit case is especially interesting because the tool relies primarily on published academic literature for its source material. When I asked Elicit the follow-up question “Did any of the cited references differentiate between raw milk intended for direct human consumption and that intended for pasteurization?”, I received the following answer:

“No, none of the cited references effectively differentiated between raw milk intended for direct human consumption and that intended for pasteurization”

Compare that with Elicit’s answer to my original question (Table 1):

“The available evidence found no documented differences in production standards, handling practices, or quality parameters, between raw milk intended for human consumption and raw milk intended for pasteurization.”

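This disconnect highlights an important point - the sometimes severe limitations of what gets published, or not, in peer-reviewed academic literature. Elicit’s original answer presented an absence of evidence as though it were evidence of no difference, when the cited papers had not addressed the distinction at all. Even tools designed to search scientific papers may generate overconfident first-pass conclusions when the underlying research does not cover the question being asked.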

My concluding thought - AI can dramatically speed up research and idea exploration and I’m glad we have it because it will get better and better. However, presently it is simply a powerful assistant, not an authority. Always verify and think critically.